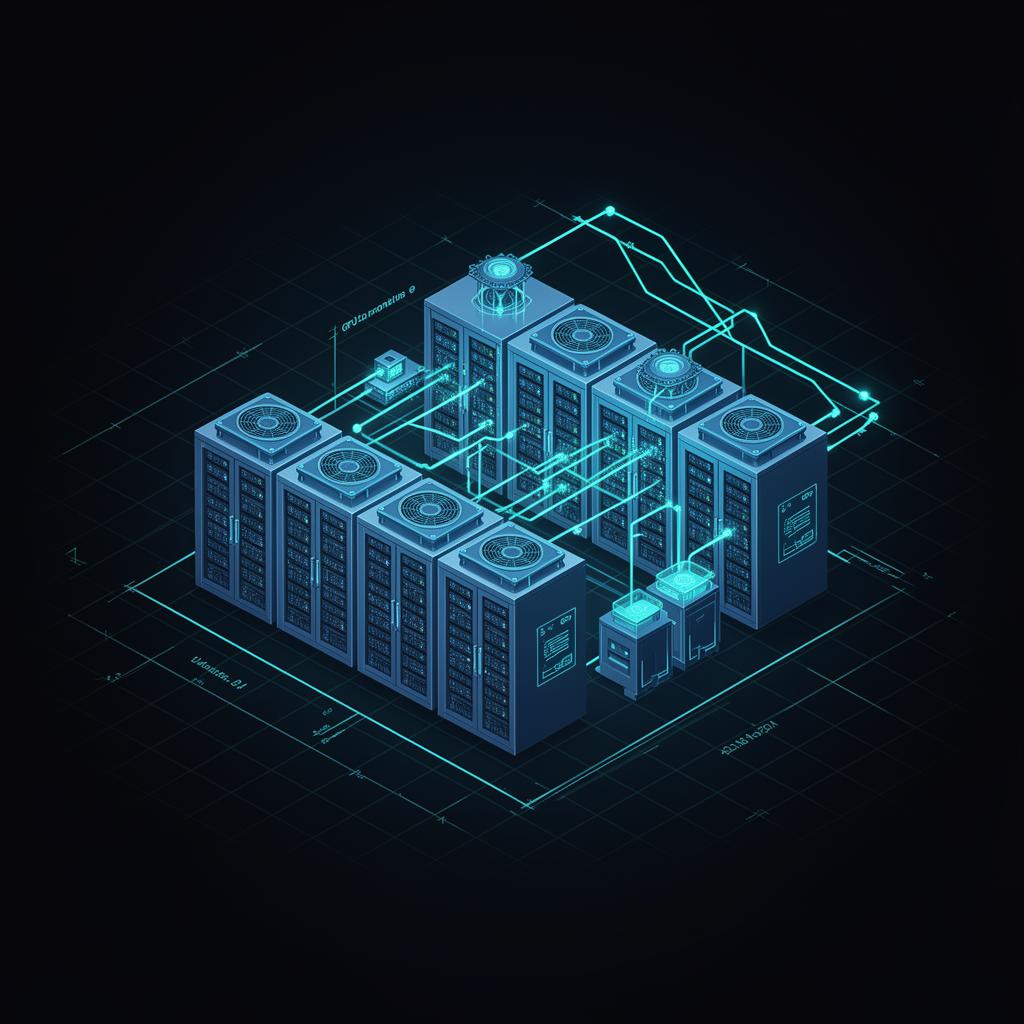

Hyperscale data centers, designed in minutes.

Plan AI/GPU clusters, multi-region cloud, hybrid, edge, and HPC architectures. Generate implementation-ready HLDs, LLDs, network topologies, runbooks, and infra-as-code from a single brief.

One platform. Every layer of your stack.

Architects, SREs, and infra leads use Datacenter Architect to go from whiteboard to vendor-ready designs in a fraction of the time.

AI Architect Copilot

Stream implementation-ready HLDs, LLDs, network topologies, and Terraform & K8s manifests from a single brief.

GPU Sizer

Right-size GPU clusters for training & inference. Power, cooling, and rack-density calculations included.

Site Selection

Compare regions on PUE, water, carbon, latency, and capex — backed by real industry datasets.

Blast Radius Analysis

Simulate failures across racks, rows, AZs, and regions. Find SPOFs before production does.
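As an illustration of the idea (not the product's actual engine), single points of failure in a topology can be found as articulation points of its link graph: nodes whose removal disconnects something. The sketch below is plain Python; the function name `find_spofs` and the device names are hypothetical.

```python
from collections import defaultdict


def find_spofs(links):
    """Return the nodes whose removal would disconnect the topology.

    `links` is an iterable of (node_a, node_b) pairs. The search is a
    standard depth-first articulation-point scan on an undirected graph.
    """
    graph = defaultdict(set)
    for a, b in links:
        graph[a].add(b)
        graph[b].add(a)

    disc, low, spofs = {}, {}, set()
    counter = 0

    def dfs(node, parent):
        nonlocal counter
        disc[node] = low[node] = counter
        counter += 1
        children = 0
        for nxt in graph[node]:
            if nxt == parent:
                continue
            if nxt in disc:
                # Back edge: an alternate path reaches an earlier node.
                low[node] = min(low[node], disc[nxt])
            else:
                children += 1
                dfs(nxt, node)
                low[node] = min(low[node], low[nxt])
                # No path from the subtree climbs above `node`.
                if parent is not None and low[nxt] >= disc[node]:
                    spofs.add(node)
        # A DFS root is a cut point only if it has several subtrees.
        if parent is None and children > 1:
            spofs.add(node)

    for node in list(graph):
        if node not in disc:
            dfs(node, None)
    return spofs


# Hypothetical fabric: gpu-a is single-homed, gpu-b is dual-homed.
links = [
    ("spine1", "leaf1"), ("spine1", "leaf2"),
    ("spine2", "leaf1"), ("spine2", "leaf2"),
    ("leaf1", "gpu-a"),
    ("leaf1", "gpu-b"), ("leaf2", "gpu-b"),
]
print(find_spofs(links))
```

Here `leaf1` is flagged because `gpu-a` has no second uplink; adding a `("leaf2", "gpu-a")` link clears the result.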

Low-Level Design Export

Generate vendor-ready cable schedules, BoMs, and device configs. Export to PDF, Excel, JSON.

Scenario Compare

Save and diff design alternatives side-by-side. Tag, comment, and share with stakeholders.

From rack-level topology to multi-region fabric.

Model GPU clusters, leaf-spine networks, cooling loops, and power distribution as a single living blueprint. Every line, port, and PDU is traceable — from the brief to the BoM.

- Spine-leaf, fat-tree & dragonfly topologies

- Liquid & immersion cooling models

- N+1 / 2N power redundancy planning

- PUE, WUE & carbon-aware siting
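For a feel of how topology choice bounds cluster size, here is a minimal sizing sketch. It assumes identical switches with an even port count (`radix`), a non-blocking split of half the leaf ports down and half up, and the textbook three-tier fat-tree formula. The function names are hypothetical and not part of any product API.

```python
def leaf_spine_capacity(radix: int) -> dict:
    """Size a non-blocking two-tier leaf-spine fabric of identical switches."""
    if radix < 2 or radix % 2:
        raise ValueError("radix must be an even port count >= 2")
    downlinks = radix // 2          # leaf ports facing servers/GPUs
    uplinks = radix - downlinks     # leaf ports facing spines, one per spine
    return {
        "spines": uplinks,
        "leaves": radix,            # each spine port feeds one leaf
        "endpoints": radix * downlinks,
    }


def fat_tree_endpoints(radix: int) -> int:
    """Endpoints supported by a three-tier fat-tree of radix-port switches."""
    return radix ** 3 // 4


def oversubscription(downlinks: int, uplinks: int,
                     down_gbps: float = 400, up_gbps: float = 400) -> float:
    """Ratio of leaf downlink to uplink bandwidth; 1.0 means non-blocking."""
    return (downlinks * down_gbps) / (uplinks * up_gbps)


print(leaf_spine_capacity(64))
print(fat_tree_endpoints(64))
print(oversubscription(48, 16))
```

With 64-port switches the two-tier design tops out at 2,048 endpoints, which is why larger GPU fabrics move to a third tier.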

Every kind of data center design.

From a single on-prem hall to a 100,000-GPU AI superpod — explore the architecture patterns we generate, compare, and ship.

Traditional

Owned hardware in a private facility. Predictable performance and full control over every layer — from PDUs to hypervisors.

- Tier III/IV redundancy (N+1, 2N power)

- In-row CRAC cooling, hot/cold aisle

- Dedicated MPLS / dark fiber uplinks
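The redundancy schemes named above reduce to simple unit counts. As a rough sketch, with a hypothetical helper `units_required` and made-up load figures: N is the number of units needed to carry the load, N+1 adds one spare, and 2N duplicates the whole set.

```python
import math


def units_required(load_kw: float, unit_kw: float, scheme: str) -> int:
    """Count UPS/generator/chiller units for a given redundancy scheme."""
    n = math.ceil(load_kw / unit_kw)   # units needed with no redundancy
    if scheme == "N":
        return n
    if scheme == "N+1":
        return n + 1                   # one spare unit
    if scheme == "2N":
        return 2 * n                   # fully mirrored second set
    raise ValueError(f"unknown scheme: {scheme}")


# Illustrative numbers only: 1,800 kW of load on 500 kW units.
for scheme in ("N", "N+1", "2N"):
    print(scheme, units_required(1800, 500, scheme))
```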

Two workloads. Two very different blueprints.

Training and inference share GPUs but almost nothing else: the fabric, cooling, and runtime stacks all diverge.

Training cluster

Massive synchronous compute for foundation-model training

- Scale-up domain: 72-GPU NVL

GB200 NVL72 racks form a single coherent NVLink domain, ideal for tensor and pipeline parallelism on models with more than 70B parameters.

- 400/800G non-blocking fabric

Rail-optimized fat-tree InfiniBand or RoCEv2 spine-leaf with full bisection bandwidth across thousands of GPUs.

- Direct-to-chip liquid cooling

Roughly 100–120 kW per rack at 1200 W TDP per GPU (the 72 GPUs alone draw 86.4 kW). Rear-door heat exchangers and CDUs target a PUE below 1.15.

- Parallel storage tier

Lustre / WekaFS / VAST delivering >1 TB/s aggregate to feed checkpoints, datasets, and resumable jobs.
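Some of the training-cluster figures can be sanity-checked with back-of-the-envelope arithmetic. The sketch below is illustrative only. It assumes 72 GPUs per rack at 1200 W each, treats PUE as total facility power divided by IT power, and assumes 14 bytes of checkpoint state per parameter (bf16 weights plus fp32 master weights and two fp32 optimizer moments). That last figure is an assumption and varies by training setup.

```python
def gpu_power_kw(gpus_per_rack: int = 72, tdp_w: float = 1200) -> float:
    """GPU-only power for one rack, in kW (excludes CPUs, NICs, switches)."""
    return gpus_per_rack * tdp_w / 1000


def facility_power_kw(it_load_kw: float, pue: float) -> float:
    """Total facility power implied by an IT load and a PUE value."""
    return it_load_kw * pue


def checkpoint_seconds(params: float, bytes_per_param: float,
                       storage_bytes_per_s: float) -> float:
    """Idealized time to write one full checkpoint at a given throughput."""
    return params * bytes_per_param / storage_bytes_per_s


gpu_kw = gpu_power_kw()
print(f"GPU power per rack: {gpu_kw:.1f} kW")
print(f"Facility power at PUE 1.15: {facility_power_kw(gpu_kw, 1.15):.1f} kW")

# 70B parameters, 14 bytes each (assumed), written at 1 TB/s aggregate.
seconds = checkpoint_seconds(70e9, 14, 1e12)
print(f"Checkpoint write time: {seconds:.2f} s")
```

Real racks draw more than the GPU-only number, and real checkpoint writes rarely sustain peak aggregate throughput, so treat both results as lower bounds.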

How it works

Three steps from idea to deployable design.

Describe your workload

Use case, scale, region, latency targets — natural language.

Stream a full design

AI produces HLD, LLD, topology, configs, runbooks.

Iterate & export

Refine via chat, compare scenarios, export to your stack.

Ready to design your next data center?

Sign up free and use our suite of architect tools — no credit card required to start.